IEEE Transactions on Pattern Analysis and Machine Intelligence, IEEE CVPR 2018

RotationNet for Joint Object Categorization and Unsupervised Pose Estimation from Multi-View Images

Abstract

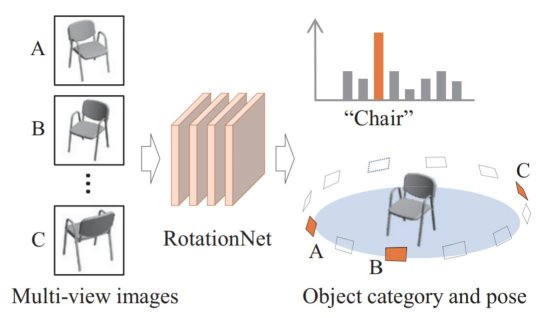

We propose a Convolutional Neural Network (CNN)-based model “RotationNet,” which takes multi-view images of an object as input and jointly estimates its pose and object category. Unlike previous approaches that use known viewpoint labels for training, our method treats the viewpoint labels as latent variables, which are learned in an unsupervised manner during the training using an unaligned object dataset. RotationNet uses only a partial set of multi-view images for inference, and this property makes it useful in practical scenarios where only partial views are available. Moreover, our pose alignment strategy enables one to obtain view-specific feature representations shared across classes, which is important to maintain high accuracy in both object categorization and pose estimation. Effectiveness of RotationNet is demonstrated by its superior performance to the state-of-the-art methods of 3D object classification on 10- and 40-class ModelNet datasets. We also show that RotationNet, even trained without known poses, achieves comparable performance to the state-of-the-art methods on an object pose estimation dataset. Furthermore, our object ranking method based on classification by RotationNet achieved the first prize in two tracks of the 3D Shape Retrieval Contest (SHREC) 2017. Finally, we demonstrate the performance of real-world applications of RotationNet trained with our newly created multi-view image dataset using a moving USB camera.
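As a rough illustration of what jointly choosing a category and a pose from a partial set of views could look like at inference time, here is a minimal sketch. It is not the authors' implementation: the function name, the score layout `log_probs[i][v][c]`, and the use of cyclic shifts of the view index as candidate poses are all assumptions made for this example.

```python
import math


def joint_category_and_pose(log_probs):
    """Pick the (class, pose) pair that best explains a set of images.

    Hypothetical layout: log_probs[i][v][c] is the log-score that image i
    shows viewpoint v of an object of class c. The images are assumed to be
    taken at consecutive viewpoints, so a candidate pose is a cyclic shift
    that maps image i to viewpoint (i + shift) % num_views.
    Returns (best_class, best_shift, best_score).
    """
    num_images = len(log_probs)          # may be fewer than num_views
    num_views = len(log_probs[0])
    num_classes = len(log_probs[0][0])

    best_class, best_shift, best_score = None, None, -math.inf
    for shift in range(num_views):
        for c in range(num_classes):
            # Sum the per-image log-scores under this pose hypothesis.
            score = sum(
                log_probs[i][(i + shift) % num_views][c]
                for i in range(num_images)
            )
            if score > best_score:
                best_class, best_shift, best_score = c, shift, score
    return best_class, best_shift, best_score
```

Because the sum runs only over the images that are actually available, the same function accepts fewer images than viewpoints, which mirrors the partial-view setting described in the abstract.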

Publication

- Asako Kanezaki, Yasuyuki Matsushita, and Yoshifumi Nishida.

RotationNet for Joint Object Categorization and Unsupervised Pose Estimation from Multi-view Images.

IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI), Vol.43, Issue 1, pp.269-283, 2021.

https://doi.org/10.1109/TPAMI.2019.2922640

- Asako Kanezaki, Yasuyuki Matsushita, and Yoshifumi Nishida.

RotationNet: Joint Object Categorization and Pose Estimation Using Multiviews from Unsupervised Viewpoints.

IEEE Conference on Computer Vision and Pattern Recognition (CVPR), accepted, 2018.

PDF

Code

- rotationnet : Caffe code used for the CVPR submission

- pytorch-rotationnet : a PyTorch implementation

Dataset

Video

BibTeX

@article{kanezaki2021_rotationnet,
  author={Asako Kanezaki and Yasuyuki Matsushita and Yoshifumi Nishida},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI)},
  title={RotationNet for Joint Object Categorization and Unsupervised Pose Estimation from Multi-View Images},
  year={2021},
  volume={43},
  number={1},
  pages={269-283},
  doi={10.1109/TPAMI.2019.2922640}}

@inproceedings{kanezaki2018_rotationnet,
  title={RotationNet: Joint Object Categorization and Pose Estimation Using Multiviews from Unsupervised Viewpoints},
  author={Asako Kanezaki and Yasuyuki Matsushita and Yoshifumi Nishida},
  booktitle={Proceedings of IEEE International Conference on Computer Vision and Pattern Recognition (CVPR)},
  year={2018},}